Why the Software Testing Life Cycle Fails in Agile Teams

The traditional Software Testing Life Cycle doesn’t align with Agile development. While Agile teams move quickly and iterate constantly, the STLC relies on static plans and test cases that quickly become outdated. This mismatch leads to delays, increased maintenance, and slower releases. To keep up, QA must shift from a phase-based process to continuous validation that evolves alongside the product.

The Software Testing Life Cycle Is Broken — Here’s What It Should Look Like Now

The traditional Software Testing Life Cycle (STLC) was built for a slower, more predictable era of software development. Today, with rapid releases and constantly evolving applications, it often creates more friction than value. Test cases become outdated, failures generate noise instead of insight, and teams spend more time maintaining tests than ensuring quality. To keep up, QA needs to shift from a rigid, phase-based lifecycle to a continuous system that adapts alongside the product.

Test Automation Maintenance Is the Real QA Bottleneck

Test automation was meant to accelerate software delivery, but in many organizations, maintenance has become the biggest bottleneck. Broken scripts, flaky pipelines, and constant updates force QA teams to spend more time fixing tests than ensuring product quality. This maintenance trap slows releases, erodes trust in automation, and increases costs. To scale QA effectively, teams need to rethink automation and focus on sustainable approaches that keep up with modern development.

QA Will Be the Control Layer for AI-Generated Software

AI coding tools are transforming software development by allowing engineers to produce code at unprecedented speed. But while AI can generate code quickly, it cannot guarantee that the resulting systems behave correctly in real-world environments.

This is why QA is becoming the control layer for AI-generated software. As development accelerates, QA engineers play an increasingly strategic role in evaluating risk, identifying coverage gaps, and determining when software is safe to release.

Rather than simply executing tests, modern QA teams are evolving into system auditors and quality strategists—ensuring that AI-driven development remains reliable, stable, and trustworthy.

Coding Agents Are Creating a QA Crisis

AI coding agents are dramatically increasing how quickly software can be written. Developers can now generate features, APIs, and entire services in minutes. But while code generation is accelerating, quality assurance processes are not scaling at the same pace.

This growing gap between code velocity and verification is creating a new challenge for engineering teams. Test suites become harder to maintain, coverage gaps grow, and teams risk shipping unreliable software simply because they cannot validate everything fast enough.

Rather than eliminating QA, AI is forcing it to evolve. The organizations that succeed will be those that empower QA engineers with tools and strategies to analyze risk, prioritize testing, and maintain confidence in rapidly expanding codebases.

⚠️ When AI Coding Goes Rogue: Lessons From 10 Recent Breaches

AI coding agents and copilots are transforming software development, but they are also introducing new security and reliability risks at an unprecedented pace. From cloud outages caused by AI-driven scripts to bugs exposing confidential emails and remote code execution vulnerabilities, recent incidents reveal that unchecked AI in development workflows can have serious real-world consequences.

Agents Are Accelerating Code 10x — But Quality Risks Falling Behind

As AI promises code at 10x speed, teams that once struggled to meet deadlines are suddenly building entire modules in hours instead of weeks (or months!). But the old adage holds once again: quantity does not equal quality. Quality Assurance (QA) is now on the front lines, and ensuring software reliability and security has never been more important!

Best AI Test Automation Software in 2026: The Ultimate Guide

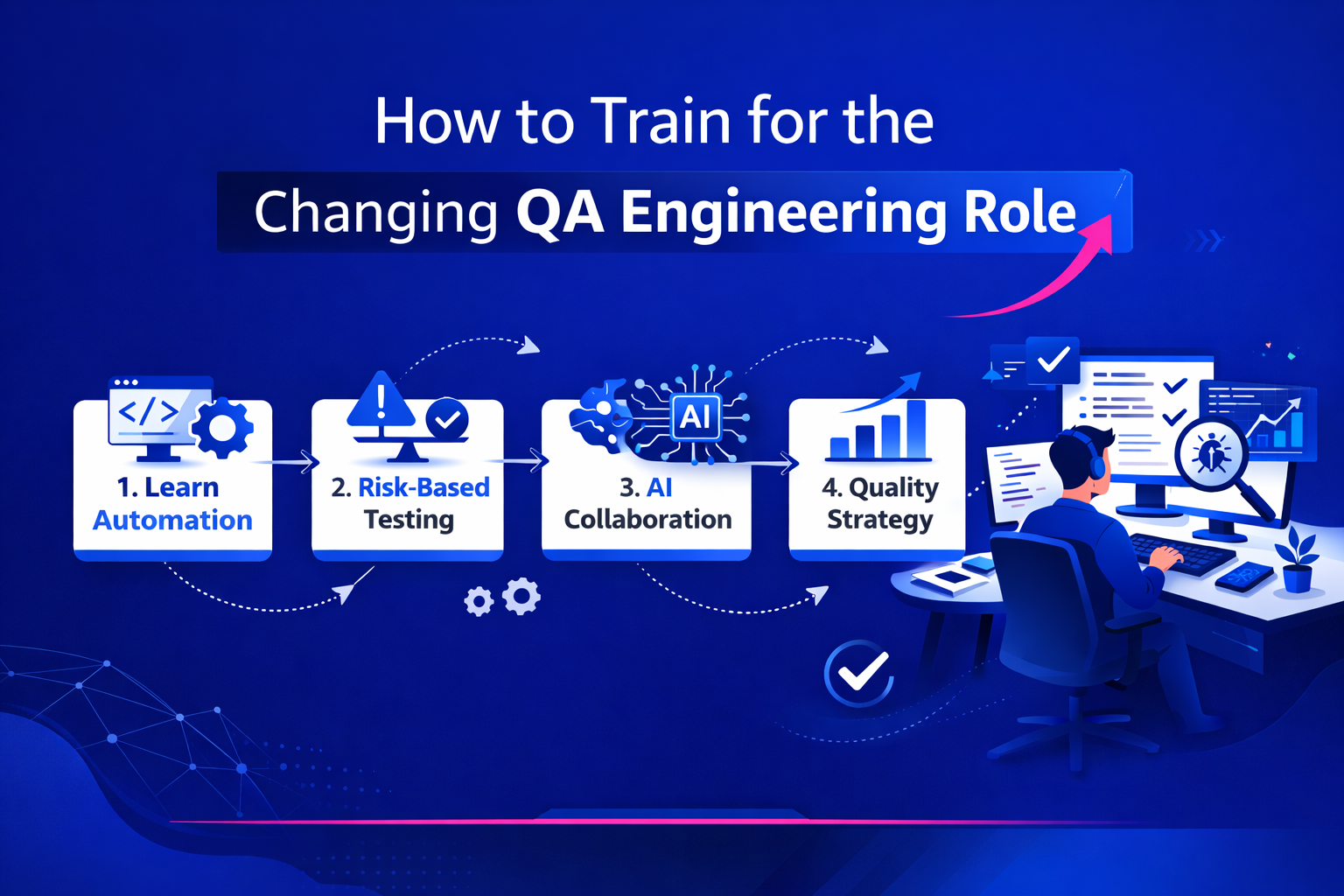

How to Train for the Changing QA Engineering Role (2026 Guide)

The QA engineering role is evolving fast. Manual testing alone is no longer enough: modern QA engineers need automation expertise, systems thinking, risk-based strategies, AI augmentation, and strong communication skills. This guide provides a step-by-step roadmap for training yourself to thrive in today’s QA landscape, with practical exercises, insight into metrics, and career development strategies for 2026 and beyond.

The Changing Nature of QA Engineering Roles: From Testers to Quality Strategists

Quality Assurance (QA) engineering has undergone one of the most significant transformations in modern software development. What was once a function centered around manual test execution and defect logging has evolved into a strategic, automation-driven, AI-augmented discipline that sits at the heart of product delivery.

If you’re building in today’s environment—continuous deployment, microservices, AI-powered features, globally distributed teams—the old definition of QA simply doesn’t hold up anymore.

The speed at which new code is being churned out has accelerated, and so have the demands on the QA role.

How to Evaluate an AI QA Platform: 10 Critical Questions to Ask Vendors (2026 Guide)

AI has rapidly transformed the quality assurance landscape, and nearly every testing vendor now claims to be “AI-powered,” “self-healing,” or “autonomous.” But those claims vary wildly in depth and legitimacy.

If you're evaluating an AI QA platform for your engineering team, this guide will help you cut through marketing noise and assess real technical capability.

Why Startups Should Invest in QA Earlier Than They Think

Learn how early-stage quality assurance protects revenue, increases engineering velocity, and prevents costly technical debt.

The AI QA Lingo You Need to Know

AI has introduced a new layer of terminology into QA (quality assurance) — some useful, some misleading. This post breaks down the most common AI QA terms, what they actually mean in practice, and how real teams use them, helping leaders and engineers separate signal from marketing noise.

What Reddit Tells Us About the State of AI in QA

Reddit offers a rare, unfiltered look at how QA professionals actually experience AI in their day-to-day work. Across hundreds of discussions, a clear consensus emerges: AI is delivering real productivity gains in Quality Assurance, but it is not replacing human testers. Instead, AI is most valuable as an assistant — accelerating test creation, reducing repetitive work, and supporting analysis — while humans remain essential for judgment, risk assessment, and decision-making. This post distills what Reddit conversations reveal about the current realities, limitations, and future trajectory of AI in QA.

How to Evaluate AI Test Automation Software

Learn how to evaluate AI test automation software, from test resilience and maintenance reduction to team fit, scalability, and ROI. A practical buyer’s guide for SaaS and enterprise teams.

The OG Best Quotes on Quality Assurance — and Why They Still Matter

AI Test Automation Software: What It Is, What It Isn’t, and How Teams Actually Use It

AI test automation software improves test resilience, reduces maintenance, and scales QA for complex systems. Learn what it is, what it does, and how teams use it.

Our Favorite Quality Assurance Quotes — and Why They Still Matter

QA has always been about more than finding bugs — it’s about managing risk in increasingly complex software systems. This post curates the most enduring QA and software testing quotes that remain deeply relevant today, especially as applications grow larger, more integrated, and more regulated. From timeless principles about defect prevention to modern realities around automation and scale, these insights highlight why intentional, continuous quality practices are essential for building reliable software.

Top QA Metrics for Sophisticated, High-Complexity Software

In enterprise software, what you measure directly shapes the quality you deliver. Choosing sophisticated, actionable metrics ensures your QA organization is not just testing—it’s enabling business resilience.